——— BUILD STAGE

Engineering meets domain expertise

The technical team began building. They ingested over 140 documents and implemented RAG (Retrieval-Augmented Generation) workflows to address user questions in natural language.

But we quickly realized raw document ingestion wasn't enough — the content was deeply specialized, and the AI needed more context to be useful.

I proposed weekly one-hour whiteboarding sessions with KM subject matter experts to give the system the context it really needed.
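To make the retrieval idea concrete, here is a minimal sketch of the retrieve-then-prompt pattern behind RAG. It is an illustration only, not the team's implementation: the `Chunk` class, the `topic` metadata key, and the word-overlap scoring are all assumptions chosen to keep the example self-contained. A production system would typically use embeddings and a vector store in place of the toy scoring.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class Chunk:
    text: str
    # Hypothetical SME-supplied context attached to each ingested passage.
    metadata: dict = field(default_factory=dict)


def tokenize(text):
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(question, chunks, top_k=2, topic=None):
    """Return the top_k chunks most similar to the question.

    If topic is given, only chunks whose metadata matches it are considered.
    Chunks with no word overlap are dropped.
    """
    q = Counter(tokenize(question))
    candidates = [
        c for c in chunks if topic is None or c.metadata.get("topic") == topic
    ]
    scored = [(cosine(q, Counter(tokenize(c.text))), c) for c in candidates]
    scored = [(s, c) for s, c in scored if s > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [c for _, c in scored[:top_k]]


def build_prompt(question, chunks):
    """Assemble the retrieved passages and the question into one prompt."""
    context = "\n".join(f"- {c.text}" for c in chunks)
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"
```

The metadata filter is where SME input would pay off in a sketch like this: a passage tagged by a domain expert can be included or excluded before any similarity scoring happens.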

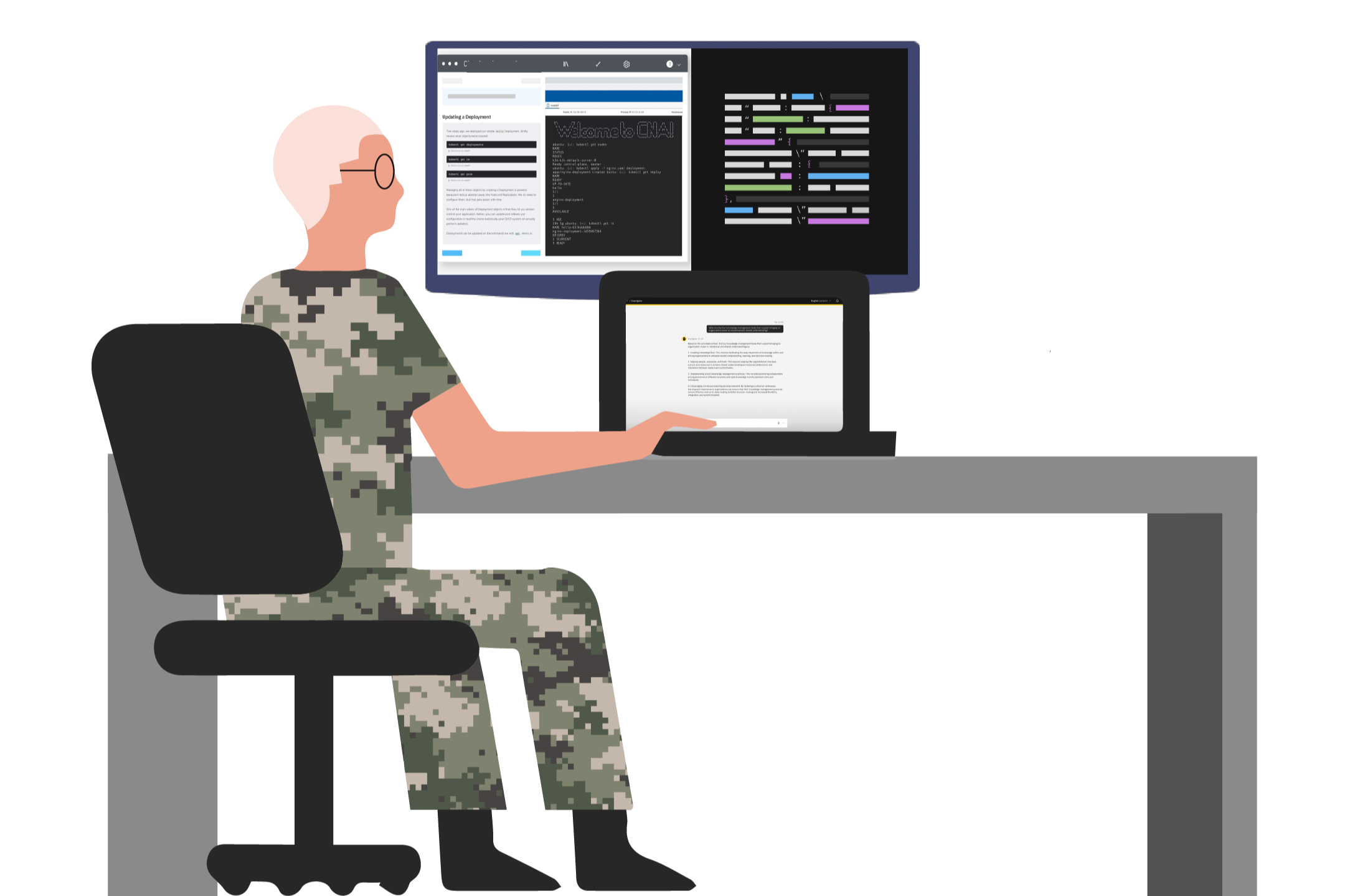

——— CLARITY BY DESIGN

Custom Whiteboarding Activities for Complex Domain Discovery

1. Working with KM students, instructors, and other SMEs, we ideated on specific scenarios that would provide value. My intent was to flesh out the details that would go into dialog flows built with our AI engineers.

2. I mapped the scenarios from Step 1 to the required system functionality and identified any additional data sources and metadata needed to make retrieval reliable.

3. Engineers prioritized scenarios by feasibility to identify high-impact, low-effort work; those items became the initial user stories for sprints.
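The prioritization in the last step can be sketched as a simple impact-versus-effort ranking. This is a hypothetical illustration: the 1-to-5 scales, the `Scenario` class, and the thresholds in `quick_wins` are assumptions, not the scoring the engineers actually used.

```python
from dataclasses import dataclass


@dataclass
class Scenario:
    name: str
    impact: int  # assumed scale: 1 (low) to 5 (high)
    effort: int  # assumed scale: 1 (low) to 5 (high)


def prioritize(scenarios):
    """Sort by impact-to-effort ratio, highest first; lower effort breaks ties."""
    return sorted(scenarios, key=lambda s: (-(s.impact / s.effort), s.effort))


def quick_wins(scenarios, min_impact=4, max_effort=2):
    """Keep only the high-impact, low-effort scenarios, in priority order."""
    return [
        s
        for s in prioritize(scenarios)
        if s.impact >= min_impact and s.effort <= max_effort
    ]
```

With placeholder scenarios A (impact 5, effort 2), B (impact 3, effort 3), and C (impact 4, effort 1), `prioritize` orders them C, A, B, and `quick_wins` keeps C and A as candidates for the first user stories.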

——— USABILITY TESTING

Two cohorts. Two rounds. Real data.

2

ROUNDS OF TESTING

2

STUDENT COHORTS

n=8

SAMPLE SIZE

Example of a page from a report visualizing the study results in graphical form.

STEP 1

Test Execution

Moderated sessions with KM students using four scenarios and general feedback questions.

STEP 2

Code Results

Qualitative and quantitative responses coded and analyzed post-session.

These iterations improved the value of the solution and surfaced many end-user ideas for broader application and scale.

STEP 3

Share with Team

Findings presented with actionable insights for engineers to address in subsequent sprints.

STEP 4

Executive Playback

Client quotes and metrics condensed into a final playback for executive leadership.

——— IMPACT

Measurable results. Real students. Real time.

40%

Reduction in time students spend researching information

20%

Increase in effective student learning outcomes (increase in test scores)

44k+

Attendees at AUSA where this tool was demonstrated

Increased students' confidence during training and improved their ability to perform tasks more quickly and effectively.

Allowed students to learn more in the same amount of time.

Standardized the knowledge research process and added explainability to how information was surfaced and used.

Enabled document scanning to detect duplicate information — reducing vetting time and improving accuracy, quality, and timeliness of searches.
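One common way to flag duplicate information is to compare documents by their overlapping word sequences. The sketch below uses word shingles and Jaccard similarity. It is a swapped-in illustration, not the method the tool actually uses; the function names, shingle size, and threshold are assumptions.

```python
import re
from itertools import combinations


def shingles(text, size=3):
    """Return the set of overlapping word sequences of the given size."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    if len(words) < size:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard(a, b):
    """Share of shingles two documents have in common (0.0 to 1.0)."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def find_near_duplicates(docs, threshold=0.6):
    """Return (name, name, score) for each pair at or above the threshold.

    docs maps a document name to its text.
    """
    sets = {name: shingles(text) for name, text in docs.items()}
    pairs = []
    for left, right in combinations(sorted(sets), 2):
        score = jaccard(sets[left], sets[right])
        if score >= threshold:
            pairs.append((left, right, round(score, 2)))
    return pairs
```

Comparing every pair is fine for a collection of a few hundred documents; a much larger corpus would usually need an approximate technique such as MinHash instead.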